MuleSoft has recently launched a major update to its fully managed Mule runtime engine cloud service. It significantly improves application performance and scalability through lightweight container-based isolation, and it simplifies the experience of deploying to the cloud.

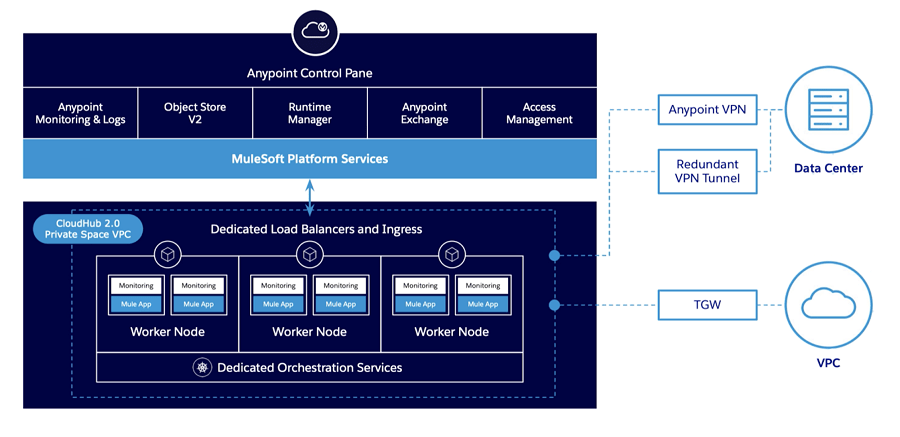

CloudHub 2.0 (CH2.0) is built on an orchestrated container platform and a service-oriented architecture based on Kubernetes, similar to the Runtime Fabric (RTF) architecture.

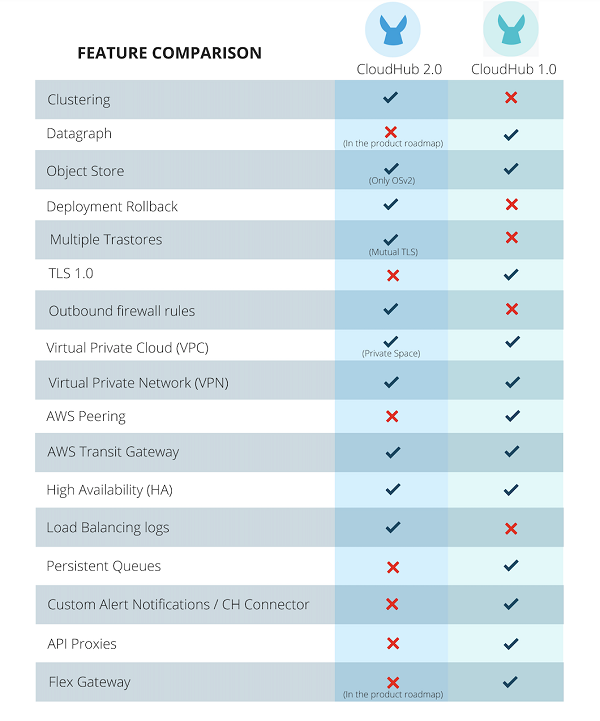

What's New in MuleSoft CloudHub 2.0

Let's familiarise ourselves with the new concepts and terminology introduced by the latest version:

- CloudHub 2.0 introduces the concept of Shared and Private Spaces. The Shared Space is where you run MuleSoft applications in multi-tenant mode when they don't need to connect to downstream systems within your organisation's network. Private Spaces can be seen as an evolution of the VPC in CloudHub 1.0 (CH1.0). A Private Space is an isolated logical space for running applications that need to connect to an external network or your data centre via Anypoint VPN or a transit gateway (VPC peering is no longer supported). Custom domains and mutual TLS configuration are now provided at the Private Space level.

- Every Private Space has its own load balancer (assigned automatically) for inbound traffic, called Private Ingress. It auto-scales with traffic, removing the need for the dedicated load balancer (DLB) from CH 1.0. Its traffic logs can be downloaded from Runtime Manager.

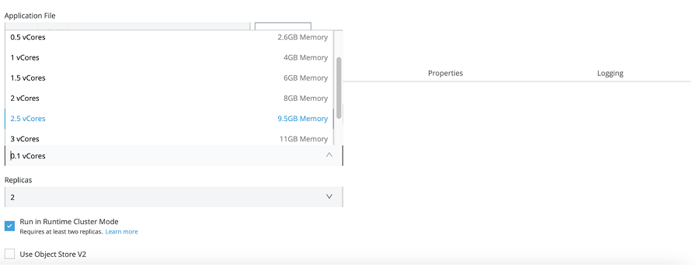

- With the introduction of clustering support in CH 2.0, you run your apps across multiple replicas to enable scaling (horizontal and vertical), load balancing, and high availability. For those familiar with CH 1.0, replica size and replica count replace worker size and number of workers in the application configuration.
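To make the terminology shift concrete, here is a minimal sketch of how a CH 1.0 sizing (worker size and number of workers) might be restated in CH 2.0 terms (replica size and replica count). The class and function names are hypothetical, and the one-replica-per-worker mapping and the rule that clustering only applies with two or more replicas are assumptions for illustration, not MuleSoft's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ch1Sizing:
    """CloudHub 1.0 sizing: worker size (vCores) and number of workers."""
    worker_size_vcores: float
    workers: int


@dataclass(frozen=True)
class Ch2Sizing:
    """CloudHub 2.0 sizing: replica size (vCores), replica count, clustering."""
    replica_size_vcores: float
    replicas: int
    clustered: bool


def to_ch2_sizing(old: Ch1Sizing, enable_clustering: bool = True) -> Ch2Sizing:
    """Restate a CH 1.0 sizing in CH 2.0 terms, one replica per worker."""
    if old.workers < 1:
        raise ValueError("an application needs at least one worker")
    # Assumption: clustering is only meaningful with two or more replicas.
    clustered = enable_clustering and old.workers > 1
    return Ch2Sizing(old.worker_size_vcores, old.workers, clustered)


if __name__ == "__main__":
    print(to_ch2_sizing(Ch1Sizing(worker_size_vcores=1.0, workers=2)))
```

Treat the result as a starting point only; the finer-grained replica sizes in CH 2.0 may let you pick a smaller size than the one your workers used.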

Starting with CloudHub

If you are just starting out with the Mule runtime, you should deploy your applications directly to CloudHub 2.0 to take advantage of the simplified experience of deploying and running MuleSoft applications in the cloud. Here are some of the benefits that many customers were missing in CloudHub 1.0:

- The ability to enable the Clustering option and leverage replica scale-out to provide horizontal scalability and reliability to your applications. In CloudHub 1.0, there wasn't a real concept of clustering. You could only achieve HA by deploying your application across multiple workers behind the load balancer, and you had to use persistent queues to distribute workloads across that set of workers.

- More granular resource capacity for the app to process data. You can now assign 0.5, 1.5, 2.5, 3.5 and 4 vCores (on top of the current vCore levels) to your applications, instead of having to jump from 0.2 straight to 1 vCore as in CH1.0 when your application requires more processing power.

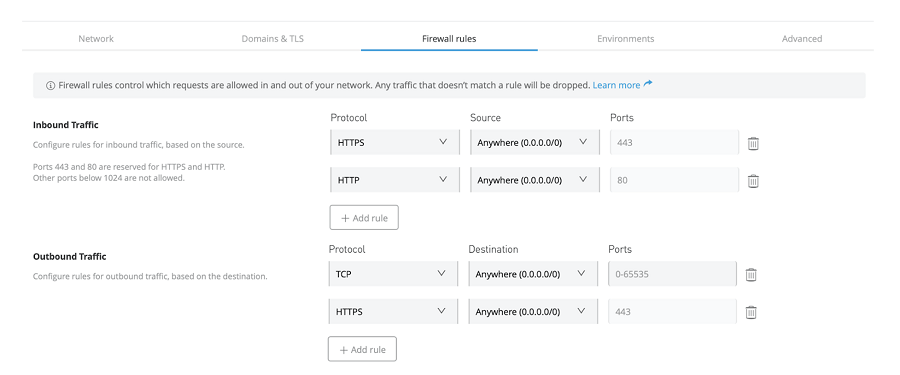

- In addition to the firewall rules for inbound traffic, outbound firewall rules have been introduced at the private space level (but not at the application level). This feature wasn't available in CloudHub 1.0, where you could only configure inbound traffic via the Dedicated LB, and that's it!

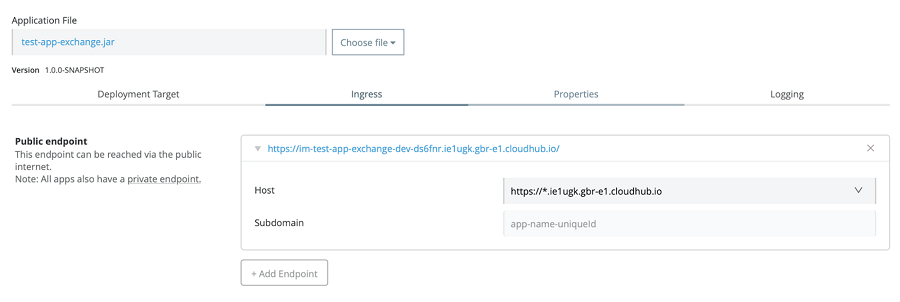

- Better control of the traffic. In CH1.0, restricting external access to only the external Experience Layer APIs in your customer-owned VPC required setting up two DLBs: one serving internal requests from within the VPC, and the other listening to requests coming from the public internet. The alternative was to compromise on HA for internal traffic by using a single DLB exposed only to the outside world. CH 2.0 makes life much easier by creating a public and a private endpoint by default when you deploy your application. The public endpoint in a private space can easily be deleted to block internet traffic from reaching your application if needed (that's always the case for Sys and Proc APIs). Private endpoints are now always accessible from within the private network via the Ingress load balancer and never by hitting the application directly, so HA is not compromised.
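The effect of the finer-grained vCore sizes mentioned above can be illustrated with a short sketch that picks the smallest available size covering a required capacity. The `smallest_fit` helper is hypothetical, and the CH 1.0 size list is an assumption limited to a few levels for illustration; check the official documentation for the full set of sizes on each platform.

```python
from typing import Iterable

# Assumption: an illustrative subset of CloudHub 1.0 worker sizes (vCores).
CH1_SIZES = [0.1, 0.2, 1.0, 2.0, 4.0]

# Sizes named in this article as available in CloudHub 2.0 on top of the above.
CH2_EXTRA_SIZES = [0.5, 1.5, 2.5, 3.5, 4.0]

# Combined, de-duplicated and sorted list used for the CH 2.0 comparison.
CH2_SIZES = sorted(set(CH1_SIZES + CH2_EXTRA_SIZES))


def smallest_fit(required_vcores: float, sizes: Iterable[float]) -> float:
    """Return the smallest size that is at least `required_vcores`."""
    for size in sorted(sizes):
        if size >= required_vcores:
            return size
    raise ValueError(f"no size large enough for {required_vcores} vCores")


if __name__ == "__main__":
    for need in (0.4, 1.2):
        print(need, smallest_fit(need, CH1_SIZES), smallest_fit(need, CH2_SIZES))
```

Under these assumed lists, an application needing roughly 0.4 vCores would land on 1 vCore in CH 1.0 but on 0.5 vCores in CH 2.0, which is the jump the article describes.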

Migrating to CloudHub 2.0

If you already have applications deployed to a VPC in CH1.0, consider the following before switching to CloudHub 2.0:

- VPC Peering and Direct Connect available in CH 1.0 have been deprecated in CH 2.0 in favour of Transit Gateway attachments. VPN is still an option and now provides HA out-of-the-box.

- Only port 8081 is used in CH 2.0 for your application's HTTP or HTTPS traffic. It might require changing the port configuration in your application's listeners.

- Inbound and Outbound Static IPs (2-3, depending on how many availability zones the region offers) are now provisioned at the Private Space level and not allocated at the application level as in CH 1.0. This means applications deployed in the same Private Space will use the same set of Inbound and Outbound static IPs. If you need unique static IPs per application, each such application would need its own private space. I can see someone might not like this combo!

- It might be time to upgrade your applications to the latest runtime version, as CH2.0 only supports Runtime versions 4.3.0 and 4.4.x.

- If your applications were using the Persistent queues feature for VM queues to distribute load and share payloads across different workers, MuleSoft recommends replacing them with Anypoint MQ or another message broker, as Persistent queues are no longer supported.

- There have been some significant changes in how schedulers are managed in CH2.0. You can now set a specific timezone in the Cron Scheduler, as opposed to always using UTC as in CH1.0. Schedulers can be run on demand or enabled/disabled, but these changes result in a restart of the application, whereas in CH 1.0 users could apply them on the fly.

- Rewrite rules set up in the Dedicated Load Balancer in CH 1.0 are not currently supported in the Ingress controller in CH 2.0. When this option becomes available, you must manually migrate all your existing DLB mappings to the Ingress Path Rewrite rules.

- Application Insights in Runtime Manager is not supported in CloudHub 2.0; MuleSoft recommends using Anypoint Monitoring for tracking business transactions instead. Custom Notifications are no longer supported either.

- Last but not least, your CI/CD might require minor tweaks, such as upgrading the Maven plugin to version 3.7.1 or above and publishing your deployable artefacts to Anypoint Exchange before the runtime deployment.

- Even after deciding to switch to CH 2.0, you can still choose which CloudHub version to deploy each application to in Runtime Manager, so you can migrate your applications gradually.
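Several of the points above can be turned into a simple pre-migration check. The sketch below is only an illustration: the `app` dictionary and its keys are hypothetical (this is not an Anypoint API), and the rules encode nothing more than what this article states, namely port 8081, runtime 4.3.0 or 4.4.x, Maven plugin 3.7.1 or above, and the features that are no longer supported. It also assumes plain dotted numeric version strings.

```python
from typing import Dict, List, Tuple

MIN_MAVEN_PLUGIN = (3, 7, 1)  # minimum Maven plugin version per the article


def parse_version(text: str) -> Tuple[int, ...]:
    """Parse a plain dotted version such as '4.4.0' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def runtime_supported(version: str) -> bool:
    """True for runtime 4.3.0 or any 4.4.x, as listed in the article."""
    parts = parse_version(version)
    return parts == (4, 3, 0) or parts[:2] == (4, 4)


def migration_findings(app: Dict) -> List[str]:
    """Return the items to fix before moving `app` (hypothetical shape)."""
    findings = []
    if app.get("http_port") != 8081:
        findings.append("change the HTTP/HTTPS listener port to 8081")
    if not runtime_supported(app["runtime_version"]):
        findings.append("upgrade the runtime to 4.3.0 or 4.4.x")
    if parse_version(app["maven_plugin_version"]) < MIN_MAVEN_PLUGIN:
        findings.append("upgrade the Maven plugin to 3.7.1 or above")
    if app.get("uses_persistent_queues"):
        findings.append("replace persistent queues with Anypoint MQ or a broker")
    if app.get("uses_dlb_rewrite_rules"):
        findings.append("plan a manual migration of DLB rewrite rules")
    if app.get("uses_custom_notifications"):
        findings.append("remove the dependency on Custom Notifications")
    return findings


if __name__ == "__main__":
    legacy_app = {
        "http_port": 8082,
        "runtime_version": "4.2.2",
        "maven_plugin_version": "3.5.4",
        "uses_persistent_queues": True,
    }
    for item in migration_findings(legacy_app):
        print("-", item)
```

Running a check like this across all applications in a VPC gives a rough picture of the migration effort before any private space is created.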

Conclusions

Unfortunately, there is no automatic option to migrate your existing infrastructure (VPC/VPN/DLB) from CloudHub 1.0 to CloudHub 2.0. Therefore, you would need to create and configure a new private space, set up a new VPN, and update your applications, as discussed above, before deploying them to the new runtime.

I strongly recommend that existing CH1.0 customers thoroughly assess their architecture and applications to ensure a smooth transition to CloudHub 2.0.
