It had been coming for a long time…

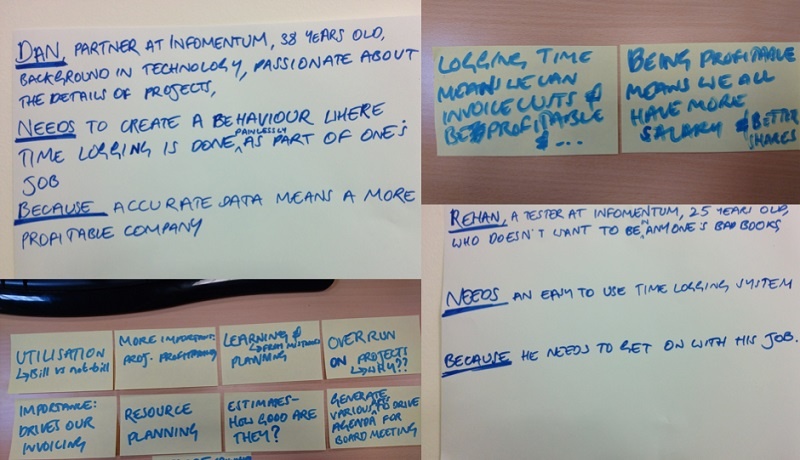

At the end of every month, developers scrambled to complete their timesheets while the operations team breathed down their necks, threatening them with sticks to get it done - and done right. The developers were left wondering why operations wanted their time logs so desperately. Surely operations already knew what the developers had been working on? The operations team, meanwhile, were scratching their heads as to why the developers didn’t just fill in their timesheets; they were reminded at the end of every month like clockwork, yet every month it was the same story. It was hugely frustrating for both sides, and tensions were approaching boiling point. As soon as the Customer Experience team had a break between projects, we knew this was a top internal issue that had to be solved.